GPT-4o was recently released, and its performance in real-time speech translation, mathematical problem solving, and other interactive scenarios is impressive. In these scenarios, integrating real-time communication (RTC) technology is crucial. RTC ensures that the large language model (LLM) can receive and process user data with minimal delay, providing a seamless and efficient user experience.

Why Does GPT-4o Need RTC?

Superior Real-Time Performance 💨

In GPT-4o's RTC use cases, particularly on the input side, the LLM needs to receive user video data in real time. Since the speed at which humans generate content cannot be accelerated, RTC is seen as an essential way to reduce latency by 100-200 milliseconds.

Weak Network Resistance Strategies 🛜

When network conditions are good, the latency difference between RTC and TCP-based transmission is minimal. Under poor network conditions, however, the difference can increase significantly. For example, when a data packet is lost, the round-trip delay can increase by 200 milliseconds, and if another packet is lost, the delay can increase by another 200 milliseconds. Because TCP (Transmission Control Protocol) delivers data strictly in order, these delays accumulate and the total delay keeps growing. RTC employs various weak network resistance strategies to cope with poor network conditions, including retransmission strategies and speech prediction and completion techniques. These strategies keep the stream stable and smooth in weak network environments.
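The accumulation effect can be illustrated with a toy model. The sketch below is not a network simulator and does not describe any real RTC stack (real stacks also retransmit selectively): it assumes the 200 ms per-loss penalty quoted above and a hypothetical 60 ms jitter buffer for the RTC-style path, and all function names are illustrative.

```python
RETRANSMIT_PENALTY_MS = 200  # per-loss delay figure quoted in the text
JITTER_BUFFER_MS = 60        # assumed fixed playout buffer (illustrative value)


def ordered_stream_lag(lost, n_packets, penalty_ms=RETRANSMIT_PENALTY_MS):
    """Playback lag per packet when delivery is strictly in order.

    Every lost packet must be retransmitted before later packets can be
    played, and nothing is skipped, so each loss adds to the running lag.
    """
    lag = 0
    lags = []
    for seq in range(n_packets):
        if seq in lost:
            lag += penalty_ms
        lags.append(lag)
    return lags


def concealed_stream_lag(lost, n_packets, buffer_ms=JITTER_BUFFER_MS):
    """Playback lag per packet when lost frames are concealed instead.

    The lag stays at the jitter-buffer depth; lost frames are filled in by
    prediction (returned here as a list of concealed sequence numbers).
    """
    concealed = [seq for seq in range(n_packets) if seq in lost]
    return [buffer_ms] * n_packets, concealed


if __name__ == "__main__":
    lost = {2, 5}
    print(ordered_stream_lag(lost, 8))    # [0, 0, 200, 200, 200, 400, 400, 400]
    print(concealed_stream_lag(lost, 8))  # ([60, 60, 60, 60, 60, 60, 60, 60], [2, 5])
```

In this model the in-order stream ends up 400 ms behind after two losses, while the concealed stream trades a small amount of audio fidelity for a constant lag.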

Ability to Handle Interruptions 🤚

RTC is particularly well-suited for handling interruption scenarios, enabling smooth interaction in real-time communication. Streaming transmission is essential for such scenarios, while CDN (Content Delivery Network) is not suitable for handling interruptions.

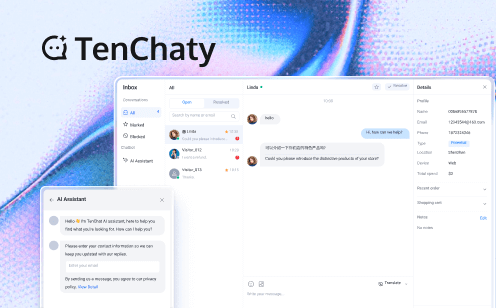

Tencent Conversational AI Solution

Building on RTC's unique advantages in real-time performance, weak network resistance, and interruption handling, Tencent RTC provides a full range of Conversational AI solutions, covering applications from call centers and online education to social entertainment and productivity tools.

Conversational AI Solution Architecture Diagram

1. Audio Receiving and Publishing 🔊

The Client Application captures audio through the RTC Engine SDK and publishes it to Tencent RTC Cloud. After receiving the audio, Tencent RTC Cloud sends it to Tencent RTC AI Service for processing.

2. Speech Processing and Feedback 💬

ASR (Automatic Speech Recognition) converts the audio into text. The text then passes through Sentiment Analysis and Interruption Handling. The LLM (Large Language Model) interprets the processed text and generates a response, combining it with RAG (Retrieval-Augmented Generation) / Customer's Knowledge Base to provide precise answers. Finally, the TTS (Text-to-Speech) module converts the generated text back into speech, which is published back to the client application.
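The flow of a single conversational turn can be sketched as a chain of stages. The example below is only an illustration of the data flow described above: every stage is a hypothetical stub (the "audio" is plain UTF-8 text, sentiment is a keyword match, retrieval is word overlap), and none of the names correspond to the Tencent RTC AI Service API.

```python
import re
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Turn:
    transcript: str
    sentiment: str
    context: List[str]
    reply_text: str
    reply_audio: bytes


def _tokens(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def stub_asr(audio: bytes) -> str:
    # Stand-in for speech recognition: the "audio" is just UTF-8 text.
    return audio.decode("utf-8")


def stub_sentiment(text: str) -> str:
    # Stand-in classifier: keyword match only.
    return "negative" if _tokens(text) & {"angry", "upset", "terrible"} else "neutral"


def retrieve(query: str, knowledge_base: List[str], top_k: int = 1) -> List[str]:
    # Stand-in for RAG retrieval: rank passages by words shared with the query.
    q = _tokens(query)
    ranked = sorted(knowledge_base, key=lambda p: len(q & _tokens(p)), reverse=True)
    return [p for p in ranked[:top_k] if q & _tokens(p)]


def stub_llm(text: str, sentiment: str, context: List[str]) -> str:
    # Stand-in for generation: answer from the retrieved passage, if any.
    prefix = "Sorry about the trouble. " if sentiment == "negative" else ""
    answer = context[0] if context else "I could not find that in the knowledge base."
    return prefix + answer


def stub_tts(text: str) -> bytes:
    # Stand-in for speech synthesis: encode the text as bytes.
    return text.encode("utf-8")


def handle_turn(audio: bytes, knowledge_base: List[str]) -> Turn:
    transcript = stub_asr(audio)
    sentiment = stub_sentiment(transcript)
    context = retrieve(transcript, knowledge_base)
    reply_text = stub_llm(transcript, sentiment, context)
    return Turn(transcript, sentiment, context, reply_text, stub_tts(reply_text))
```

Keeping each stage behind its own function is what makes it possible to swap an ASR, LLM, or TTS provider without touching the rest of the turn logic.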

Conversational AI in Different Scenarios

Online Education 📚

Real-time online education scenarios such as online problem solving and language teaching deserve attention. Problem solving is a highly personalized process that also draws on question banks. As RAG and model capabilities improve, and with the real-time performance of RTC, teaching assistance in online education will improve greatly. Compared with having users upload questions and wait for answers, real-time tutoring and learning-companion scenarios offer a more advanced experience.

Integrating voice capabilities allows for the creation of virtual teaching assistants that mimic real-time human interaction, providing personalized instruction and responsive feedback within educational scenarios.

Social Entertainment 💃

Companionship (Virtual Companion) is arguably the fastest and most direct scenario to implement, because it places low demands on planning and RAG, which makes latency easy to reduce. It only requires defining the character's background and timbre, and it is well suited to end-to-end scenarios.

Whether it's a Virtual AI Companion, Character AI Dialogue, Interactive Game, or Metaverse application, Tencent RTC solutions use real-time interaction capabilities to understand user intentions and provide tailored feedback.

Call Center 💁

Scenarios such as Customer Service place higher demands on Planning and RAG and have somewhat higher latency than companionship. In such scenarios, the delay is determined not only by end-to-end and First Token latency, but also by the system-level delay of the entire Pipeline. However, with a well-designed parallel mechanism and other optimizations, the delay can be reduced to 1-2 seconds.
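One way to read the "parallel mechanism" is that independent pipeline stages run concurrently instead of one after another. The sketch below assumes that planning and retrieval do not depend on each other; the stage names and the simulated latencies (50 ms and 80 ms) are made up for illustration.

```python
import asyncio
import time


async def plan(query: str) -> str:
    await asyncio.sleep(0.05)  # simulated planning latency
    return f"plan for: {query}"


async def retrieve(query: str) -> str:
    await asyncio.sleep(0.08)  # simulated RAG lookup latency
    return f"documents for: {query}"


async def run_sequential(query: str):
    # Stages run back to back, so their latencies add up.
    start = time.perf_counter()
    p = await plan(query)
    d = await retrieve(query)
    return (p, d), time.perf_counter() - start


async def run_parallel(query: str):
    # Stages run concurrently, so the slower one dominates.
    start = time.perf_counter()
    p, d = await asyncio.gather(plan(query), retrieve(query))
    return (p, d), time.perf_counter() - start


if __name__ == "__main__":
    _, sequential_s = asyncio.run(run_sequential("order status"))
    _, parallel_s = asyncio.run(run_parallel("order status"))
    print(f"sequential: {sequential_s * 1000:.0f} ms, parallel: {parallel_s * 1000:.0f} ms")
```

With these simulated numbers the sequential path costs roughly the sum of both stages, while the parallel path costs roughly the slower stage alone.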

What's more, features such as AI Sales Consultant, Intelligent Outbound Calling, and E-commerce Assistant leverage RAG and voice interaction technologies to provide a rich, real-time customer service experience. These solutions not only reduce operational costs but also significantly enhance service efficiency, ensuring customers receive the best possible support.

Productivity Tools 💼

Productivity tools such as Voice Search Assistant, Voice Translation Assistant, Schedule Assistant, and Office Assistant are designed to streamline workflows and increase efficiency. By allowing users to command and control applications using their voice, these tools reduce the need for manual input, making daily tasks easier and more efficient.

Medical Diagnosis 🧑‍⚕️

Introducing real-time interaction in the medical field can greatly reduce patient anxiety. Many real-time improvements can be made in remote diagnosis, consultation, and personalized advice scenarios. For example, for frequent, mild cases (such as a child with a fever or an elderly person who has fallen), there is no need to consult an extensive medical reference or involve many expert models, so much of the preprocessing can be done in Planning and RAG, which shortens the model's response time. Even a small improvement in the model's latency helps ease the patient's anxiety.

Why Choose Tencent RTC for Conversational AI?

Looking to build a powerful, no-code AI Voice Assistant? Tencent RTC is your ultimate solution! With its unmatched versatility, ease of use, and cutting-edge features, Tencent RTC makes implementing Conversational AI a breeze. Here’s why it stands out:

1. Seamless Integration with Multiple AI Services

Tencent RTC supports integration with a wide range of STT, LLM, and TTS providers, including Azure, Deepgram, OpenAI, DeepSeek, MiniMax, Claude, Cartesia, ElevenLabs, and more. This flexibility allows you to choose the best services for your specific use case. When OpenAI is selected as the LLM provider, any LLM that exposes an OpenAI-compatible API endpoint is supported, including Claude and Google Gemini.
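"OpenAI-compatible" generally means that a provider accepts the same Chat Completions request shape, so switching providers changes only the base URL, API key, and model name. The sketch below builds such a request with the standard library without sending it. It illustrates the general convention rather than Tencent RTC's own configuration format, and the base URL, key, and model name in the usage note are hypothetical placeholders.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, user_text: str) -> urllib.request.Request:
    """Build (but do not send) a Chat Completions request for an OpenAI-compatible endpoint."""
    body = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a concise voice assistant."},
            {"role": "user", "content": user_text},
        ],
        "stream": True,  # stream tokens so speech synthesis can start early
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

For example, `build_chat_request("https://llm.example.com/v1", "sk-test", "example-model", "Hello")` targets a placeholder provider; pointing the same call at another compatible provider only requires different arguments.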

2. No-Code Configuration

Tencent RTC simplifies the setup process with a user-friendly interface, enabling you to configure Conversational AI in just a few minutes. No extensive coding knowledge is required, making it accessible to everyone.

3. Real-Time Interruption Support

Users can interrupt the AI's response at any time, enhancing the fluidity and naturalness of conversations.
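Interruption (often called barge-in) can be sketched as: keep playing the AI's speech until the user has been speaking continuously for some minimum duration, then stop. The example below is a simplified model, not Tencent RTC's implementation. It assumes one voice-activity flag and one TTS chunk per 20 ms frame, and the function names and the 100 ms threshold are hypothetical.

```python
from typing import List, Optional, Tuple


def first_interrupt_frame(
    vad_frames: List[bool], frame_ms: int = 20, min_speech_ms: int = 100
) -> Optional[int]:
    """Return the index of the frame at which continuous user speech
    first reaches min_speech_ms, or None if it never does."""
    needed = -(-min_speech_ms // frame_ms)  # ceiling division
    run = 0
    for i, speaking in enumerate(vad_frames):
        run = run + 1 if speaking else 0  # short blips reset the counter
        if run >= needed:
            return i
    return None


def play_with_barge_in(
    tts_chunks: List[str], vad_frames: List[bool], frame_ms: int = 20, min_speech_ms: int = 100
) -> Tuple[List[str], bool]:
    """Play one TTS chunk per frame and stop once an interruption is confirmed.

    Returns the chunks that were played and whether playback was cut short.
    """
    stop_at = first_interrupt_frame(vad_frames, frame_ms, min_speech_ms)
    if stop_at is None or stop_at >= len(tts_chunks):
        return list(tts_chunks), False
    return tts_chunks[:stop_at], True
```

Requiring a minimum run of speech frames is a design choice that keeps coughs and background noise from cutting the AI off; a shorter threshold reacts faster but triggers more false interruptions.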

4. Advanced Features

AI Noise Suppression: Ensures clear audio input, even in noisy environments.

Latency Monitoring: Tracks real-time performance to optimize conversation flow, including LLM latency and TTS latency.

Switch Providers on the Fly: Without ending the conversation, you can modify the interruption duration, or switch between different LLM and TTS providers (and voice IDs) to experiment with various configurations.
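The latency monitoring mentioned above can be approximated by timing each stage of a turn and aggregating the samples. The following is a minimal sketch under that assumption: `LatencyMonitor` and the stage names "llm" and "tts" are illustrative and are not part of the Tencent RTC SDK.

```python
import time
from collections import defaultdict
from contextlib import contextmanager
from statistics import mean


class LatencyMonitor:
    """Collects per-stage latency samples in milliseconds."""

    def __init__(self):
        self._samples_ms = defaultdict(list)

    def record(self, stage: str, elapsed_ms: float) -> None:
        self._samples_ms[stage].append(elapsed_ms)

    @contextmanager
    def measure(self, stage: str):
        # Time the enclosed block and store the result under `stage`.
        start = time.perf_counter()
        try:
            yield
        finally:
            self.record(stage, (time.perf_counter() - start) * 1000)

    def summary(self) -> dict:
        return {
            stage: {"count": len(v), "mean_ms": mean(v), "max_ms": max(v)}
            for stage, v in self._samples_ms.items()
        }
```

Wrapping each provider call in `with monitor.measure("llm"):` or `with monitor.measure("tts"):` gives per-stage numbers that can be compared before and after switching providers.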

5. Multi-Platform Integration

Tencent RTC also supports local development and deployment across Web, iOS, and Android platforms, providing flexibility for diverse applications.

Ready to see it in action? Watch this video or follow the tutorial to start building your AI Voice Assistant today!

Why Choose Tencent RTC Conversational AI Solutions?

Deliver Precise and Stable Conversational AI

LLM + RAG integration allows users to upload their own knowledge bases to reduce misinformation and achieve more targeted and stable conversational AI.

Ultra-low Latency Communication

Global end-to-end latency for voice and video transmission between the model and users is less than 300 ms, ensuring smooth and uninterrupted communication.

Achieve Natural Dialogue with AI

ASR + TTS technology ensures clear speech recognition and precise text-to-speech output, with a variety of voice options for a personalized communication experience.

Emotional Communication Experience

Sentiment analysis and interruption handling accurately recognize and respond to user emotions, providing an emotionally rich communication experience.

Empower Your AIGC Platforms with Tencent RTC

Tencent RTC's real-time audio and video solutions, combined with LLM, empower your AIGC platforms to deliver exceptional user experiences. Whether it's enhancing customer service in call centers, personalizing education, enriching social entertainment, or boosting productivity, our solutions ensure high-quality interactions with ultra-low latency and precise communication capabilities.

If you have any questions or need assistance, our support team is always ready to help. Please feel free to Contact Us or join us on Telegram.